The release of GPT-5.3-Codex represents a significant inflection point in the trajectory of large language models (LLMs), moving away from passive pattern recognition toward active architectural contribution. While the broader public discourse often focuses on the conversational nuances of generative AI, the engineering reality of the 5.3-Codex iteration lies in its recursive development cycle. This model was not merely trained on human-authored code; it played a documented role in optimizing the very scripts, data-cleaning pipelines, and loss functions that define its existence. From the perspective of industrial automation and mechanical engineering, this marks the transition from AI as a tool to AI as a foundational layer in the software development lifecycle.

The Architecture of Self-Correction

To understand the technical significance of GPT-5.3-Codex, one must look at the methodology behind its training. Traditional LLM development involves a rigid separation between the model and the developer. Engineers write the code to ingest data, manage weights, and execute backpropagation. In the case of GPT-5.3-Codex, OpenAI implemented a bootstrap mechanism where the predecessor model, GPT-5.2, was tasked with auditing the training codebase for the newer version. This involved refactoring Python and C++ modules to improve computational throughput and identifying bottlenecks in the distributed training environment.

Furthermore, the 5.3-Codex variant introduces a refined attention mechanism that prioritizes long-range dependencies in complex codebases. When dealing with repositories exceeding 100,000 lines of code, standard models often lose track of variable states defined in distant modules. GPT-5.3-Codex uses a hierarchical context window that lets it maintain a semantic map of the entire project structure. Because generated code can be grounded in that map, the output is more consistent with the actual codebase, and "hallucinated" functions that do not exist in the current environment become less likely. The result is a model that behaves less like a creative writer and more like a senior systems architect.
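The idea of checking generated code against a map of the project can be illustrated with a small sketch. This is not how GPT-5.3-Codex works internally; it is a minimal external check, assuming a hypothetical two-module project, that uses Python's standard `ast` module to index every function a project defines and then flags calls in a generated snippet that resolve to nothing. The names `defined_functions`, `unresolved_calls`, and the sample modules are illustrative only.

```python
import ast
import builtins


def defined_functions(sources):
    """Map each function name to the module that defines it."""
    index = {}
    for module, code in sources.items():
        for node in ast.walk(ast.parse(code)):
            if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
                index[node.name] = module
    return index


def unresolved_calls(snippet, index):
    """Return plain-name calls that are not in the index, not builtins,
    and not defined inside the snippet itself."""
    tree = ast.parse(snippet)
    local = {
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    }
    missing = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            name = node.func.id
            if name not in index and name not in local and not hasattr(builtins, name):
                missing.add(name)
    return sorted(missing)


# Hypothetical project: two modules, each defining one function.
sources = {
    "motion.py": "def home_axis(axis):\n    return 0\n",
    "io.py": "def read_encoder(channel):\n    return 0\n",
}
project_index = defined_functions(sources)

# Hypothetical generated snippet that calls one function the project lacks.
generated = (
    "pos = read_encoder(1)\n"
    "home_axis(pos)\n"
    "calibrate_gripper()\n"
    "print(pos)\n"
)
missing = unresolved_calls(generated, project_index)  # ['calibrate_gripper']
```

The sketch only handles direct calls by bare name; method calls, imports, and aliasing would need a real symbol resolver.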

Bridging the Gap Between Software and Hardware

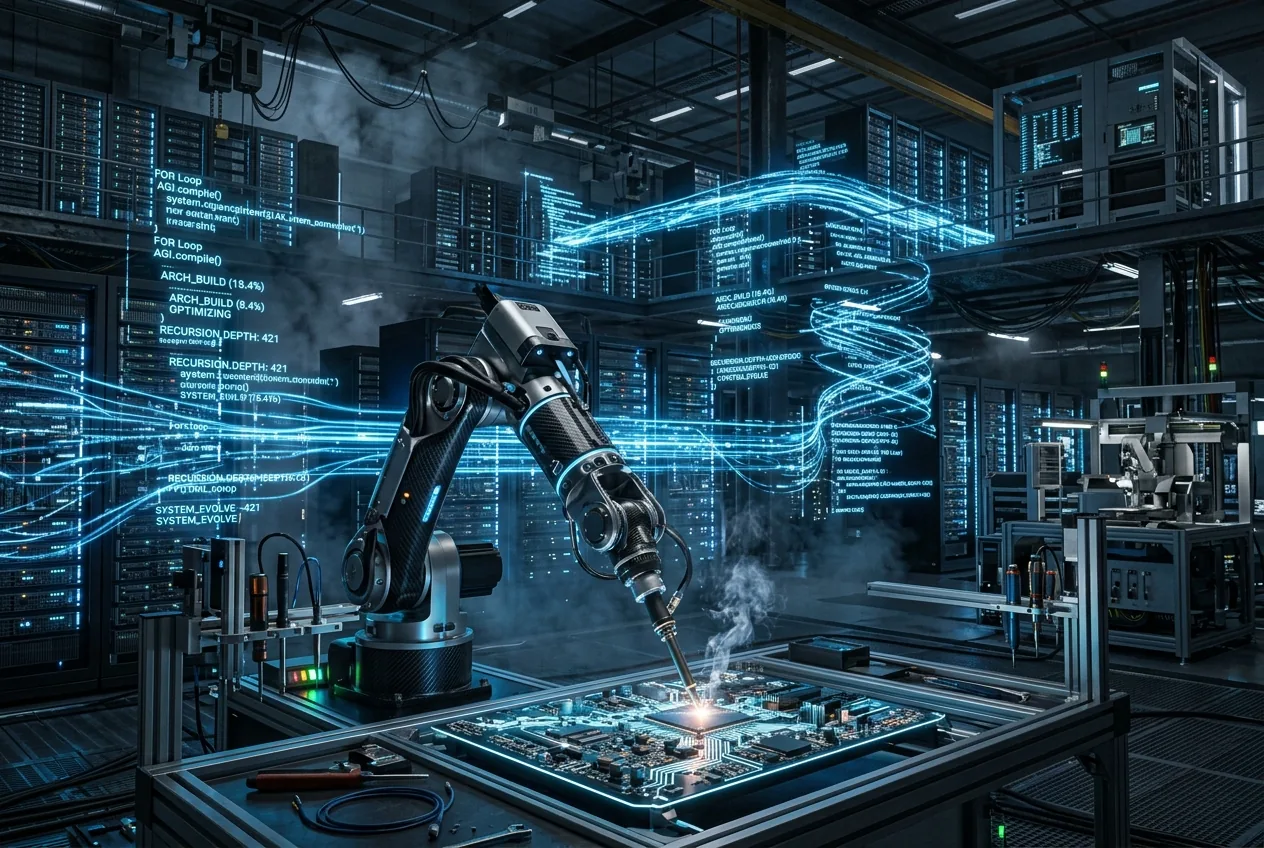

This specialization enables the model to assist in the generation of firmware that is both robust and performant. In a recent test case involving a multi-axis robotic arm, the model was able to generate motor control algorithms that optimized for power consumption without sacrificing torque precision. This was done by integrating physics-based constraints directly into the code generation prompt, a task that GPT-5.3-Codex handles with a high degree of mathematical accuracy. The model essentially acts as a bridge between high-level conceptual design and low-level hardware execution, automating a translation process that historically required deep expertise in both fields.
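The article does not describe how the constraints in the robotic-arm test were encoded, so the following is only a sketch of one plausible shape for the idea: the same limits are rendered into prompt text and reused to validate whatever the model returns. It assumes a simplified DC motor model (torque = Kt × current, copper loss = I²R) and invented values for a hypothetical "elbow" joint; every name and number here is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JointLimits:
    """Hypothetical per-joint limits; all values are illustrative."""
    name: str
    max_torque_nm: float
    torque_constant_nm_per_a: float  # Kt in the simplified motor model
    winding_resistance_ohm: float    # R in the simplified motor model
    max_copper_loss_w: float


def constraints_to_prompt(limits):
    """Render the limits as plain text to embed in a code generation prompt."""
    return (
        f"Joint {limits.name}: |torque| <= {limits.max_torque_nm} N*m; "
        f"copper loss I^2*R <= {limits.max_copper_loss_w} W "
        f"(Kt = {limits.torque_constant_nm_per_a} N*m/A, "
        f"R = {limits.winding_resistance_ohm} ohm)."
    )


def copper_loss_w(limits, torque_nm):
    """Resistive loss needed to hold a torque: I = torque / Kt, P = I^2 * R."""
    current_a = torque_nm / limits.torque_constant_nm_per_a
    return current_a ** 2 * limits.winding_resistance_ohm


def check_setpoint(limits, torque_nm):
    """Accept a torque setpoint only if it respects both limits."""
    return (
        abs(torque_nm) <= limits.max_torque_nm
        and copper_loss_w(limits, torque_nm) <= limits.max_copper_loss_w
    )


elbow = JointLimits("elbow", 10.0, 0.5, 2.0, 500.0)
prompt_fragment = constraints_to_prompt(elbow)

ok = check_setpoint(elbow, 5.0)        # 10 A -> 200 W, within both limits
too_hot = check_setpoint(elbow, 8.0)   # 16 A -> 512 W, exceeds the loss limit
```

Keeping the limits in one structure means the prompt and the post-generation check cannot drift apart, which matters more than the specific motor model used.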

The economic implications of this are profound. In the current industrial landscape, the bottleneck for automation is often the time required to write and debug custom code for specific manufacturing tasks. If GPT-5.3-Codex can handle the bulk of the boilerplate and optimization work, the time-to-deployment for new robotic cells could be halved. This increases the viability of automation for small and medium-sized enterprises (SMEs) that lack the capital to maintain large teams of software engineers. Recursive AI may be putting high-end industrial programming within reach of companies that could not previously afford it.

How Does Recursive Self-Improvement Impact Safety?

A central debate surrounding the GPT-5.3-Codex release is the safety profile of a model that assists in its own construction. When a model begins to influence its own parameters or training code, the risk of unanticipated emergent behaviors increases. However, OpenAI has integrated a multi-layered verification system that uses formal methods, a mathematical approach to verifying that code behaves exactly as intended. This is meant to keep the model from introducing "logic bombs" or security vulnerabilities into the training pipeline during the optimization process.

From an engineering standpoint, this verification layer is the most critical component of the 5.3 architecture. It ensures that while the model can propose optimizations, those optimizations are subjected to rigorous testing against a set of deterministic rules. This is similar to how we treat safety-critical systems in aerospace or automotive engineering. You do not simply trust the algorithm; you trust the verification framework that bounds the algorithm. This pragmatic approach to AI safety moves away from philosophical hand-wringing and toward the implementation of hard constraints and unit tests that ensure the model's output remains within safe operational envelopes.
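The principle of trusting the verification framework rather than the algorithm can be sketched in a few lines. The example below is differential testing against a trusted reference, which is far weaker than the formal methods described above and is not OpenAI's system; it only shows the gate pattern, in which a proposed optimization is accepted solely when it reproduces the reference behavior on a fixed set of cases. All function names are hypothetical.

```python
def accept_optimization(reference, candidate, cases):
    """Accept the candidate only if it matches the reference on every case
    and never raises. Any mismatch or exception rejects it."""
    for args in cases:
        expected = reference(*args)
        try:
            actual = candidate(*args)
        except Exception:
            return False
        if actual != expected:
            return False
    return True


def sum_squares_reference(n):
    """Trusted, slow implementation: 0^2 + 1^2 + ... + n^2."""
    return sum(i * i for i in range(n + 1))


def sum_squares_closed_form(n):
    """Proposed optimization using the closed form n(n+1)(2n+1)/6."""
    return n * (n + 1) * (2 * n + 1) // 6


def sum_squares_wrong(n):
    """Plausible-looking but incorrect proposal (formula for the sum of i)."""
    return n * (n + 1) // 2


cases = [(0,), (1,), (2,), (10,), (1000,)]

accepted = accept_optimization(sum_squares_reference, sum_squares_closed_form, cases)
rejected = accept_optimization(sum_squares_reference, sum_squares_wrong, cases)
```

Note that the incorrect proposal agrees with the reference for n = 0 and n = 1, so a gate with too few cases would have let it through; the quality of the rule set bounds the quality of the guarantee.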

However, the question remains: could a model eventually optimize its way around its own safety constraints? The current consensus among technical journalists and engineers is that we are still far from that reality. GPT-5.3-Codex is still fundamentally bound by the data it was fed and the loss functions defined by human researchers. Its "self-building" capability is currently restricted to efficiency improvements and code refactoring, rather than a fundamental rewriting of its own objectives. The control remains in the hands of the engineers who oversee the training clusters, providing a necessary check on the model's recursive capabilities.

Economic Viability and the Cost of Intelligence

The industrial sector is notoriously sensitive to the cost of compute. Deploying an LLM for real-time manufacturing oversight requires substantial hardware resources. OpenAI has addressed this by focusing GPT-5.3-Codex on inference efficiency. By pruning redundant pathways in the transformer architecture (a process the model itself assisted in), OpenAI has managed to lower the cost per token for API users while maintaining high performance. This makes it economically feasible to integrate AI-driven code generation into continuous integration and continuous deployment (CI/CD) pipelines.

In a commercial setting, the value proposition of GPT-5.3-Codex lies in its ability to reduce technical debt. For many legacy industries, their software infrastructure is a patchwork of decades-old code that is difficult to maintain. GPT-5.3-Codex can be used to scan these legacy systems, identify inefficiencies, and suggest modern equivalents that are more compatible with current hardware. This refactoring capability represents a massive potential saving in terms of labor and hardware longevity. Instead of replacing an entire system, an engineer can use the model to modernize the existing codebase, extending the life of physical assets through software optimization.

The Future of the Software-Hardware Interface

As we look toward the next iterations of the Codex line, the focus will likely shift from code generation to full-system orchestration. GPT-5.3-Codex has already shown that it can manage the complexities of its own training environment; the next logical step is for such models to manage the complexities of a smart factory or an automated logistics hub. The integration of AI into the very foundation of software development suggests that we are entering an era of "dynamic code," where software evolves in real time to meet the changing demands of the hardware it controls.

The pragmatic view of this transition is one of cautious optimism. The tools are becoming more powerful, and the barrier to entry for complex automation is falling. However, the responsibility for oversight remains a human endeavor. Engineers must become proficient in auditing AI-generated code, focusing on the higher-level logic and system-wide interactions rather than the minutiae of syntax. GPT-5.3-Codex is a powerful assistant, but its true value is unlocked only when it is directed by those who understand the physical realities of the machines it is meant to serve. In the end, the model that helped build itself is still a tool, albeit the most sophisticated tool the industrial world has ever seen.
